Designing a DynamoDB table for scalability matters most for applications that need high availability and consistent performance under growing load. This guide examines the elements that determine how well a table scales, from the principles of horizontal scaling to strategies for optimizing read and write operations. It covers data modeling techniques, capacity planning, and indexing strategies for building robust and efficient data solutions.

This document serves as a guide, systematically breaking down the complexities of DynamoDB design. We will explore various aspects, including data modeling best practices for e-commerce platforms, selecting the right partition and sort keys for social media applications, and managing large items. We will also investigate the use of indexes, optimizing read and write operations, and handling throttling errors. Through real-world examples and case studies, this guide provides a practical understanding of DynamoDB’s capabilities and how to leverage them effectively.

Understanding the Core Principles of DynamoDB for Scalability

DynamoDB, a fully managed NoSQL database service, is designed for high availability and scalability. Its architecture inherently supports massive scale by automatically managing the underlying infrastructure, allowing developers to focus on application development rather than database administration. Understanding these core principles is crucial for designing DynamoDB tables that can handle increasing workloads without performance degradation.

Horizontal Scaling in DynamoDB

Horizontal scaling, the ability to add more resources to handle increased load, is a fundamental characteristic of DynamoDB. Unlike vertical scaling, which involves increasing the resources of a single server, horizontal scaling allows DynamoDB to distribute data and workload across multiple servers or partitions.

- DynamoDB achieves horizontal scaling through partitioning. Data is automatically divided and stored across multiple physical servers, referred to as partitions. Each partition is responsible for a subset of the data, allowing for parallel processing of read and write requests.

- As the workload increases, DynamoDB automatically adds more partitions to handle the increased traffic. This automatic scaling eliminates the need for manual intervention and ensures consistent performance, even during traffic spikes.

- The capacity of a DynamoDB table is determined by the provisioned read capacity units (RCUs) and write capacity units (WCUs). DynamoDB utilizes these units to scale the underlying infrastructure based on the actual read and write traffic.

DynamoDB Architecture and Automatic Scaling

DynamoDB’s architecture is designed to provide automatic scaling and high availability. This architecture relies on several key components working in concert to manage data storage, request routing, and capacity allocation.

- Partitioning: DynamoDB partitions data based on the partition key attribute of each item. Items with the same partition key are stored on the same partition. This mechanism enables efficient data retrieval and distribution across the underlying infrastructure. The number of partitions dynamically increases as the table’s data size or traffic grows.

- Data Replication: DynamoDB replicates data across multiple Availability Zones within a region. This replication provides high availability and fault tolerance. If a partition becomes unavailable, DynamoDB automatically fails over to a replica in another Availability Zone, ensuring data durability.

- Capacity Management: DynamoDB uses provisioned capacity mode or on-demand capacity mode to manage the resources allocated to a table. In provisioned capacity mode, users specify the RCUs and WCUs required. DynamoDB automatically scales the underlying infrastructure to meet the provisioned capacity. On-demand capacity mode automatically scales the capacity based on the incoming traffic without requiring users to specify RCUs or WCUs.

- Request Routing: DynamoDB uses a distributed request routing layer to direct incoming requests to the appropriate partitions. This layer analyzes the partition key of each request and routes it to the physical server that owns that key. How evenly requests spread across partitions depends on the partition key design, not on the router.

Partitions, Read Capacity Units (RCUs), and Write Capacity Units (WCUs)

The interplay between partitions, RCUs, and WCUs is crucial for understanding DynamoDB’s scalability and performance characteristics. Proper configuration of these elements ensures optimal resource utilization and responsiveness.

- Partitions: Partitions are the fundamental unit of storage and throughput in DynamoDB. Each partition has a limited capacity for reads and writes. The number of partitions is dynamically adjusted by DynamoDB based on the table’s data size and traffic.

- Read Capacity Units (RCUs): RCUs represent the number of strongly consistent reads per second or the number of eventually consistent reads per second that a table can perform. One RCU represents one strongly consistent read per second for items up to 4 KB in size, or two eventually consistent reads per second for items up to 4 KB in size.

For example, if a table is provisioned with 10 RCUs and all reads are strongly consistent, it can perform 10 reads per second for items up to 4KB in size.

- Write Capacity Units (WCUs): WCUs represent the number of write operations per second that a table can perform. One WCU represents one write operation per second for items up to 1 KB in size.

For example, if a table is provisioned with 5 WCUs, it can perform 5 write operations per second for items up to 1 KB in size.

- Relationship: The provisioned RCUs and WCUs determine the throughput capacity of a DynamoDB table. DynamoDB automatically scales the underlying infrastructure to meet the provisioned capacity. If the actual traffic exceeds the provisioned capacity, DynamoDB throttles requests.

- Impact of Partition Key: The choice of partition key significantly impacts the performance and scalability of a DynamoDB table. A poorly chosen partition key can lead to hot partitions, where a single partition receives a disproportionate amount of traffic, limiting overall performance. It’s crucial to select a partition key that distributes data evenly across partitions. For instance, using a timestamp as a partition key can lead to hot partitions if the data access pattern is time-based, where all the writes go to the same partition for the most recent timestamp.

Instead, using a composite key or a key with a random prefix can distribute the load more evenly.
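The idea of spreading a hot key over several partition key values can be sketched as follows. This is a minimal illustration, not DynamoDB's own mechanism: it uses a calculated suffix (derived from a hash of the item ID) rather than a purely random prefix, so that a reader can recompute the shard for a known item. The names `SHARD_COUNT`, `sharded_partition_key`, and `all_shard_keys` are hypothetical, and the shard count of 10 is an assumption.

```python
import hashlib

SHARD_COUNT = 10  # assumed number of shards; tune to the expected write rate


def sharded_partition_key(base_key: str, item_id: str, shard_count: int = SHARD_COUNT) -> str:
    """Append a deterministic shard suffix to a hot key such as a date."""
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    shard = int.from_bytes(digest[:4], "big") % shard_count
    return f"{base_key}#{shard}"


def all_shard_keys(base_key: str, shard_count: int = SHARD_COUNT) -> list:
    """List every partition key value a reader must query to see the whole logical key."""
    return [f"{base_key}#{n}" for n in range(shard_count)]


if __name__ == "__main__":
    print(sharded_partition_key("2024-01-20", "order-42"))
    print(all_shard_keys("2024-01-20"))
```

The trade-off is on the read side: fetching everything for one logical key now takes one query per shard, with the results merged by the application.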

Data Modeling Best Practices for DynamoDB

Designing an effective data model is paramount for achieving scalability and performance in DynamoDB. A well-crafted model minimizes read and write costs, maximizes query efficiency, and facilitates future growth. The choice of data modeling techniques significantly impacts the application’s ability to handle increasing workloads and evolving data requirements. This section delves into practical strategies and techniques for optimizing data models within the DynamoDB environment.

Designing a Data Model for an E-commerce Platform

Creating a data model for an e-commerce platform necessitates careful consideration of product listings, customer orders, and related entities. This involves determining the appropriate partition and sort keys, as well as the relationships between different data elements.

- Product Listing Attributes: Each product listing should include attributes such as product ID (primary key), product name, description, price, category, image URLs, and inventory count. These attributes enable efficient searching and filtering based on product characteristics.

- Customer Order Attributes: Customer orders should encompass attributes like order ID (primary key), customer ID, order date, order status (e.g., pending, shipped, delivered), total amount, shipping address, and a list of ordered product IDs with quantities. This structure supports order tracking and customer order history retrieval.

- Customer Attributes: Customer data can be stored separately, including customer ID (primary key), name, email address, shipping addresses, and order history (potentially using a GSI for efficient order lookup by customer). This facilitates customer management and personalization.

- Relationships:

- One-to-Many (Customer to Orders): One customer can have multiple orders.

- One-to-Many (Product to Reviews): One product can have multiple reviews.

- Many-to-Many (Orders to Products): One order can contain multiple products, and one product can be part of multiple orders. This is typically handled through a junction table or by embedding product information within the order.

- Example Data (Simplified):

- Product: `{"productId": "P123", "productName": "Laptop X", "price": 1200, "category": "Electronics", "inventory": 10}`

- Order: `{"orderId": "O456", "customerId": "C789", "orderDate": "2024-01-20", "orderItems": [{"productId": "P123", "quantity": 1}, {"productId": "P456", "quantity": 2}]}`

- Customer: `{"customerId": "C789", "name": "Alice Smith", "email": "[email protected]"}`

Data Modeling Techniques and Their Trade-offs

Several data modeling techniques are available for DynamoDB, each with its own set of advantages and disadvantages. Understanding these trade-offs is crucial for selecting the most appropriate approach for a specific use case.

- Single-Table Design: This approach consolidates all related data into a single table. The primary key is typically composed of a partition key and a sort key, allowing for flexible data organization and efficient querying of related data.

- Pros: Fewer requests per access pattern (related items can be fetched together with a single `Query` on the table or an index rather than one read per table), fewer tables to manage, simplified application logic.

- Cons: More complex data modeling initially, potential for large item sizes, requires careful consideration of partition key design to avoid hot keys.

- Denormalization: Denormalization involves duplicating data across multiple items or tables to optimize read performance. This technique is particularly useful for frequently accessed data that can be efficiently replicated.

- Pros: Faster read operations (reduced joins), improved query performance for frequently accessed data.

- Cons: Increased write costs (data duplication), potential for data inconsistency, requires careful data synchronization strategies.

- Normalization: Normalization involves storing data in separate tables and using relationships (e.g., foreign keys) to link them. While this approach is common in relational databases, it can lead to performance issues in DynamoDB due to the need for multiple read operations.

- Pros: Data consistency (reduced data duplication), simpler data updates.

- Cons: Slower read operations (requires multiple reads), increased complexity for joins, increased read capacity units (RCUs).

- Global Secondary Indexes (GSIs): GSIs provide alternative views of the data, allowing for efficient querying based on different attributes. This technique is crucial for supporting various access patterns.

- Pros: Enables efficient querying on non-key attributes, supports flexible data access patterns.

- Cons: Increased write costs (updates to GSIs), potential for eventual consistency issues, requires careful planning of index attributes.

Applying the “One-to-Many” Relationship in DynamoDB

The “one-to-many” relationship, such as a customer having multiple orders, can be effectively modeled in DynamoDB using a single-table design. The primary key is often composed of a partition key (e.g., customer ID) and a sort key (e.g., order ID). This design allows for efficient retrieval of all orders associated with a specific customer.

| Partition Key (customerId) | Sort Key (SK) | Data Type | Description |
|---|---|---|---|
| C123 | CUSTOMER#C123 | String | Customer Information (e.g., Name, Address) |
| C123 | ORDER#O456 | String | Order Details (e.g., Order Date, Total Amount) |
| C123 | ORDER#O789 | String | Order Details (e.g., Order Date, Total Amount) |
| C456 | CUSTOMER#C456 | String | Customer Information (e.g., Name, Address) |
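With this layout, fetching every order for one customer is a single `Query` whose key condition matches the partition key and the `ORDER#` sort key prefix. The sketch below only builds the request parameters in the low-level DynamoDB format; the table name `AppTable` and the function name are hypothetical, and the attribute names `customerId` and `SK` are taken from the table above.

```python
def orders_for_customer_query(customer_id: str, table_name: str = "AppTable") -> dict:
    """Build Query parameters that return only the ORDER# items of one customer."""
    return {
        "TableName": table_name,  # hypothetical table name
        "KeyConditionExpression": "customerId = :pk AND begins_with(SK, :prefix)",
        "ExpressionAttributeValues": {
            ":pk": {"S": customer_id},
            ":prefix": {"S": "ORDER#"},
        },
    }


if __name__ == "__main__":
    params = orders_for_customer_query("C123")
    print(params["KeyConditionExpression"])
```

Assuming boto3 is installed and credentials are configured, the dictionary could be passed as `boto3.client("dynamodb").query(**params)`. Dropping the `begins_with` clause would return the customer item and all of its orders in one call.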

Choosing the Right Partition Key and Sort Key

Selecting appropriate partition and sort keys is crucial for maximizing the performance and scalability of a DynamoDB table. These keys dictate how data is distributed and accessed, directly impacting read and write throughput, cost efficiency, and overall application responsiveness. A poorly designed key schema can lead to hotspots, uneven data distribution, and performance bottlenecks, negating the benefits of DynamoDB’s underlying architecture.

Significance of Partition Key Optimization

The partition key, also known as the hash key, is the primary key component in DynamoDB. It determines the logical partition where an item is stored. DynamoDB uses the partition key’s value to calculate a hash value, which then determines the physical storage location of the item across the underlying storage nodes. A well-chosen partition key ensures even data distribution across these nodes, preventing hotspots and maximizing the potential for parallel processing of read and write requests.

A well-chosen partition key offers several key advantages:

- Even Data Distribution: The partition key’s hash value should distribute data evenly across all partitions. This even distribution ensures that no single partition becomes overloaded with requests, preventing performance bottlenecks. This is directly related to the concept of horizontal scaling, a cornerstone of DynamoDB’s architecture.

- Optimized Read Performance: With an appropriate partition key, retrieving a single item (using `GetItem`) is highly efficient. DynamoDB can quickly locate the item by hashing the partition key value. This results in low-latency read operations.

- Optimized Write Performance: Similarly, writing a new item is fast because DynamoDB knows exactly where to store the data based on the partition key’s hash. Even data distribution helps prevent throttling during periods of high write activity.

- Scalability: By distributing data across multiple partitions, the table can scale to handle significantly higher read and write throughput. DynamoDB automatically manages the allocation of partitions based on demand.

Conversely, a poor choice can cause severe performance degradation. If all items are written to the same partition (due to a partition key with limited variation), that partition becomes a bottleneck, leading to throttling and impacting overall application performance.

Impact of Sort Key on Data Organization and Query Efficiency

The sort key, also known as the range key, is the secondary key component in DynamoDB. It is used in conjunction with the partition key to uniquely identify an item within a table. The sort key provides a mechanism for organizing items within a partition, enabling efficient querying and filtering of data. The sort key’s data type can be string, number, or binary.

The sort key significantly influences data organization and query efficiency:

- Data Ordering: Items within a partition are stored in sorted order based on the sort key value. This enables efficient range queries (e.g., finding all items within a specific date range).

- Efficient Querying: When combined with the partition key, the sort key enables efficient querying using the `Query` operation. This allows you to retrieve items based on specific criteria related to the sort key (e.g., filtering by date, price, or other numerical or textual attributes).

- Complex Data Relationships: The sort key facilitates the modeling of relationships between items. For instance, in a social media application, you could use the user ID as the partition key and the timestamp of a post as the sort key. This allows you to retrieve all posts for a specific user in chronological order.

- Composite Primary Keys: The combination of partition key and sort key creates a composite primary key. This unique combination is crucial for item identification and data integrity.

Without a sort key, you would only be able to retrieve data based on the partition key, which is limiting for many use cases.

Partition Key and Sort Key Selection for a Social Media Application

Consider a social media application storing user posts and comments. The primary goal is to efficiently retrieve posts for a specific user, display posts in chronological order, and retrieve comments associated with a specific post.

The following key design is effective:

- Partition Key: `UserID` (e.g., a unique identifier for each user).

- Sort Key: `Timestamp` (e.g., the date and time the post or comment was created).

This design provides several advantages:

- Retrieving User Posts: To retrieve all posts for a specific user, run a `Query` with the `UserID` as the partition key value. Adding a key condition on the sort key (`Timestamp`) restricts the result to a specific time range. Because only the matching items in one partition are read, this is a very efficient operation.

- Displaying Posts Chronologically: The sort key (`Timestamp`) ensures that posts are returned in chronological order: ascending by default, or newest first when `ScanIndexForward` is set to `false`.

- Retrieving Comments for a Post: This design can be extended to comments. A comment could have a primary key composed of the `PostID` as the partition key and the `CommentTimestamp` as the sort key. This design facilitates efficient retrieval of comments for a specific post.

- Scalability and Performance: The `UserID` as the partition key allows for horizontal scaling as the number of users and posts increases. DynamoDB will distribute the data across multiple partitions.

This design avoids hotspots because the partition key is highly variable (unique `UserID` values). The sort key provides order, allowing for efficient retrieval and filtering based on the timestamp.
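A time-range query against this key design might look like the sketch below, which again only builds the request parameters. It assumes timestamps are stored as ISO 8601 strings in UTC (so that string order matches time order) and uses a hypothetical table name `Posts`. `Timestamp` is a DynamoDB reserved word, so the expression refers to it through the placeholder `#ts`.

```python
def user_posts_query(user_id: str, start_iso: str, end_iso: str,
                     newest_first: bool = True, limit: int = 20,
                     table_name: str = "Posts") -> dict:
    """Build Query parameters for one user's posts within a time range."""
    return {
        "TableName": table_name,  # hypothetical table name
        "KeyConditionExpression": "UserID = :uid AND #ts BETWEEN :start AND :end",
        # Timestamp is a reserved word, so it needs an expression attribute name.
        "ExpressionAttributeNames": {"#ts": "Timestamp"},
        "ExpressionAttributeValues": {
            ":uid": {"S": user_id},
            ":start": {"S": start_iso},
            ":end": {"S": end_iso},
        },
        # False returns items in descending sort key order (newest first).
        "ScanIndexForward": not newest_first,
        "Limit": limit,
    }


if __name__ == "__main__":
    print(user_posts_query("U1", "2024-01-01T00:00:00Z", "2024-01-31T23:59:59Z"))
```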

Capacity Planning and Provisioning

Effective capacity planning and provisioning are crucial for maintaining the performance, cost-effectiveness, and scalability of a DynamoDB table. This involves understanding the different capacity modes, accurately estimating resource requirements, and implementing a monitoring and adjustment strategy to accommodate changing workloads. Failing to properly provision capacity can lead to throttling, impacting application performance, or over-provisioning, resulting in unnecessary costs.

Provisioned vs. On-Demand Capacity Modes

DynamoDB offers two primary capacity modes: provisioned and on-demand. Each mode caters to different workload characteristics and offers distinct advantages and disadvantages.

The following points describe the key differences:

- Provisioned Capacity: In this mode, you explicitly specify the read capacity units (RCUs) and write capacity units (WCUs) that your table requires. This mode is suitable for predictable workloads where the read and write patterns are relatively consistent. It offers cost optimization when the workload is steady and known. However, it requires careful planning to avoid under-provisioning (leading to throttling) or over-provisioning (leading to wasted resources and higher costs).

You can adjust the provisioned capacity as needed, but this takes time, and during adjustments, performance might be impacted.

- On-Demand Capacity: With on-demand capacity, DynamoDB automatically scales the read and write capacity based on the actual traffic your table receives. This mode is ideal for unpredictable workloads with fluctuating read and write patterns, such as applications with infrequent spikes or those that are difficult to forecast. It eliminates the need for capacity planning, as DynamoDB handles the scaling automatically. However, it generally costs more than provisioned capacity, especially for sustained, predictable workloads.

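On-demand tables can still be throttled when traffic rises very sharply: AWS documents that a table instantly accommodates up to double its previous peak traffic, and requests beyond that may be throttled if the increase happens within 30 minutes. New or long-idle tables that expect a sudden spike may therefore need to be pre-warmed.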

Estimating RCUs and WCUs

Accurately estimating the required RCUs and WCUs is vital for optimal performance and cost management. This involves analyzing the expected read and write patterns of your application, considering item sizes, and understanding the consistency requirements.

The following steps outline a method for estimating capacity:

- Analyze Read and Write Patterns: Determine the expected number of reads and writes per second. This requires understanding your application’s usage patterns and traffic forecasts. For instance, if your application is expected to handle 100 reads and 50 writes per second at peak, start with these numbers.

- Calculate Item Size: Determine the average size of the items you will store in DynamoDB. Item size impacts the number of RCUs and WCUs required, as larger items consume more capacity units. For example, consider that the average item size is 1 KB.

- Determine Consistency Requirements: Decide on the desired read consistency. Strong consistency requires more RCUs than eventual consistency. For example, if you require strong consistency for all reads, you will need to factor in the associated capacity costs.

- Calculate RCUs: Each read is billed in 4 KB increments, so round the item size up to the next multiple of 4 KB before multiplying.

- For strongly consistent reads:

`RCUs = Number of reads per second × ceil(Item size in KB / 4)`

For example, with 100 strongly consistent reads per second and an average item size of 1 KB:

`RCUs = 100 × ceil(1 / 4) = 100 × 1 = 100 RCUs`

- For eventually consistent reads (half the cost of strongly consistent reads):

`RCUs = (Number of reads per second × ceil(Item size in KB / 4)) / 2`

For example, with 100 eventually consistent reads per second and an average item size of 1 KB:

`RCUs = (100 × 1) / 2 = 50 RCUs`

If the result is fractional, round up, since capacity is provisioned in whole units.

- Calculate WCUs: Each write is billed in 1 KB increments.

- For writes:

`WCUs = Number of writes per second × ceil(Item size in KB / 1)`

For example, with 50 writes per second and an average item size of 1 KB:

`WCUs = 50 × ceil(1 / 1) = 50 WCUs`

- Factor in Spikes and Growth: Account for potential traffic spikes and future growth by adding a buffer to your estimated RCUs and WCUs. A common practice is to add a 20-30% buffer to account for unexpected peaks or future growth.

- Test and Iterate: Deploy your table with the estimated capacity and monitor its performance. Adjust the capacity based on the actual observed read and write patterns.

For instance, consider an e-commerce application. During a flash sale, the application anticipates a surge in read and write activity. Let’s say the expected peak load involves 1000 strongly consistent reads per second and 200 writes per second, with an average item size of 2 KB. A 2 KB item fits within a single 4 KB read unit but needs two 1 KB write units:

- RCUs = 1000 × ceil(2 / 4) = 1000 × 1 = 1000 RCUs

- WCUs = 200 × ceil(2 / 1) = 200 × 2 = 400 WCUs

Adding a 25% buffer for unexpected peaks:

- Provisioned RCUs = 1000 × 1.25 = 1250 RCUs

- Provisioned WCUs = 400 × 1.25 = 500 WCUs

This provides a starting point. Continuous monitoring and adjustment are necessary.
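The estimate can be captured in a small helper, sketched below under the documented billing rules: reads are charged per 4 KB of item size (rounded up), eventually consistent reads cost half as much, and writes are charged per 1 KB (rounded up). The function names and the default 25% buffer are illustrative choices, not part of any AWS API.

```python
import math


def required_rcus(reads_per_second: float, item_size_kb: float,
                  strongly_consistent: bool = True) -> int:
    """Read capacity units for a steady read rate at a given item size."""
    units_per_read = math.ceil(item_size_kb / 4)  # billed in 4 KB increments
    rcus = reads_per_second * units_per_read
    if not strongly_consistent:
        rcus = rcus / 2  # eventually consistent reads cost half
    return math.ceil(rcus)


def required_wcus(writes_per_second: float, item_size_kb: float) -> int:
    """Write capacity units for a steady write rate at a given item size."""
    units_per_write = math.ceil(item_size_kb)  # billed in 1 KB increments
    return math.ceil(writes_per_second * units_per_write)


def with_buffer(units: int, buffer_fraction: float = 0.25) -> int:
    """Add headroom for spikes and growth, rounding up to whole units."""
    return math.ceil(units * (1 + buffer_fraction))


if __name__ == "__main__":
    # Flash sale scenario: 1000 strongly consistent reads/s, 200 writes/s, 2 KB items.
    rcus = required_rcus(1000, 2)
    wcus = required_wcus(200, 2)
    print(rcus, wcus, with_buffer(rcus), with_buffer(wcus))
```

Because sizes are rounded up per item rather than averaged, a workload with a few large items can need noticeably more capacity than the average item size suggests.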

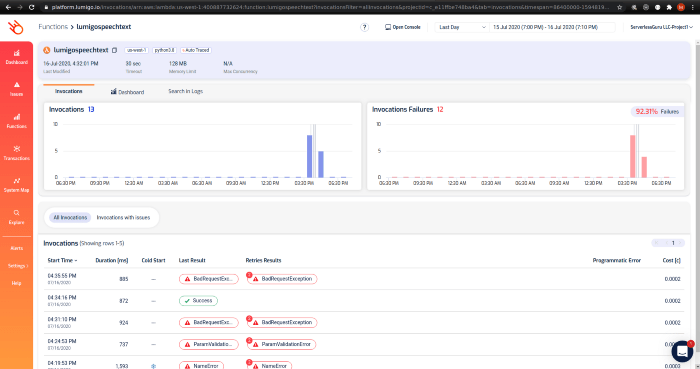

Monitoring and Adjusting Capacity

Regular monitoring and adjustment are crucial for maintaining optimal performance and cost-effectiveness. This involves tracking key metrics, setting up alerts, and proactively adjusting capacity as needed.

The following steps describe the procedure:

- Monitor Key Metrics: Use CloudWatch to monitor the following metrics:

- ConsumedReadCapacityUnits: The number of read capacity units consumed by your table.

- ConsumedWriteCapacityUnits: The number of write capacity units consumed by your table.

- ProvisionedReadCapacityUnits: The provisioned read capacity units.

- ProvisionedWriteCapacityUnits: The provisioned write capacity units.

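- ReadThrottleEvents: The number of read requests that exceeded the provisioned read capacity and were throttled.

- WriteThrottleEvents: The number of write requests that exceeded the provisioned write capacity and were throttled.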

- SuccessfulRequestLatency: The latency of successful requests.

- Set Up Alerts: Configure CloudWatch alarms to trigger notifications when certain thresholds are exceeded. For example:

- Alert when ConsumedReadCapacityUnits or ConsumedWriteCapacityUnits consistently exceeds 70-80% of the provisioned capacity.

- Alert when ReadThrottleEvents or WriteThrottleEvents rise above zero.

- Alert when SuccessfulRequestLatency exceeds acceptable thresholds.

- Analyze Trends: Regularly review the CloudWatch metrics to identify trends and patterns in resource consumption. This helps you anticipate future capacity needs.

- Adjust Capacity: When alerts are triggered or trends indicate a need for adjustment, modify the provisioned capacity.

- Increase Capacity: If you observe throttling or consistently high utilization, increase the provisioned RCUs and/or WCUs. You can increase capacity in increments of 1 RCU/WCU or in larger increments, depending on the needs. Be aware that increasing capacity takes some time to propagate.

- Decrease Capacity: If the utilization is consistently low, consider decreasing the provisioned RCUs and/or WCUs to optimize costs. However, be cautious about decreasing capacity too aggressively, as this could lead to throttling during peak periods.

- Automate Capacity Management: Consider using DynamoDB auto scaling, which relies on AWS Application Auto Scaling, to adjust provisioned capacity automatically between a configured minimum and maximum based on a target utilization. This automates the capacity management process and helps maintain performance without manual changes.

- Review and Refine: Regularly review and refine your capacity planning and monitoring strategy. As your application evolves and traffic patterns change, adjust your capacity estimates, monitoring metrics, and alerting thresholds.

For example, an application experiencing consistently high read utilization might trigger an alarm when ConsumedReadCapacityUnits exceeds 80% of the ProvisionedReadCapacityUnits. The operations team would then analyze the trends, determine the appropriate increase in RCUs, and implement the change. Subsequently, they would monitor the impact of the change, confirming that throttling is reduced, and latency remains within acceptable limits. This iterative process ensures optimal performance and cost efficiency.
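The utilization check behind such an alarm can be sketched in a few lines. CloudWatch reports `ConsumedReadCapacityUnits` as a total per period, so the `Sum` statistic has to be divided by the period length before it is compared with the provisioned per-second rate. The function names, the 80% threshold, and the "three breaching datapoints" rule are assumptions chosen for illustration.

```python
def utilization(consumed_sum: float, period_seconds: int, provisioned_units: float) -> float:
    """Fraction of provisioned capacity used during one CloudWatch period."""
    if provisioned_units <= 0 or period_seconds <= 0:
        raise ValueError("provisioned_units and period_seconds must be positive")
    average_per_second = consumed_sum / period_seconds
    return average_per_second / provisioned_units


def should_alarm(datapoint_sums, provisioned_units: float, period_seconds: int = 60,
                 threshold: float = 0.8, min_breaches: int = 3) -> bool:
    """True when at least min_breaches datapoints exceed the utilization threshold."""
    breaches = sum(
        1 for consumed in datapoint_sums
        if utilization(consumed, period_seconds, provisioned_units) > threshold
    )
    return breaches >= min_breaches


if __name__ == "__main__":
    # Three one-minute datapoints for a table provisioned with 100 RCUs.
    print(should_alarm([5400, 5400, 5400], provisioned_units=100))
```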

Indexing Strategies for Efficient Queries

DynamoDB’s indexing capabilities are crucial for optimizing query performance and enabling flexible data access patterns. Indexes allow for querying data based on attributes other than the primary key, offering significant advantages in terms of data retrieval speed and versatility. However, the use of indexes introduces trade-offs that must be carefully considered to ensure efficient and cost-effective data management.

Types of Indexes Available in DynamoDB

DynamoDB offers two primary types of indexes: Global Secondary Indexes (GSIs) and Local Secondary Indexes (LSIs). Understanding the characteristics of each type is essential for selecting the appropriate indexing strategy.

- Global Secondary Indexes (GSIs): GSIs provide a way to query data based on attributes that are not part of the table’s primary key. They are essentially independent tables, each containing a projection of the base table’s data and a different key schema. GSIs offer flexibility in terms of query patterns, as they allow you to define new partition keys and sort keys based on your application’s needs.

They can be created or deleted at any time, and they don’t have to be created when the table is created. When data in the base table changes, DynamoDB automatically updates the corresponding GSIs.

- Local Secondary Indexes (LSIs): LSIs, on the other hand, are tightly coupled with the base table and share the same partition key. They can only be created when the base table is created, and they provide a different sort key for a specific partition key value. LSIs offer a way to query data within a specific partition based on a different sort key attribute.

For tables with LSIs, each item collection (all base table items and index entries that share one partition key value) is limited to 10 GB. LSIs are most useful when you want to query data within a specific logical grouping.

Trade-offs Involved in Using Indexes

While indexes enhance query performance, they introduce several trade-offs that must be carefully evaluated. These include storage costs, write performance impacts, and the complexity of managing index updates.

- Storage Costs: Each index requires additional storage space to store its data. The amount of storage depends on the size of the data projected into the index and the number of indexed attributes. For GSIs, storage costs are calculated based on the size of the GSI’s data, which is a subset of the base table’s data, potentially including the primary key and any projected attributes.

The more indexes you create, and the more attributes you project into those indexes, the higher your storage costs will be.

- Write Performance Impacts: When you write data to a table with indexes, DynamoDB must update both the base table and the indexes. This can increase write latency and consume more write capacity units (WCUs). The impact on write performance grows with the number of indexes and the size of the data being written, because each affected index requires additional write operations. For example, if a write changes attributes that are projected into two GSIs, DynamoDB writes to the base table and to both indexes, so the operation consumes roughly three times the write capacity of a write to the base table alone (more if an index key attribute changes, since the old index entry is deleted and a new one inserted).

- Read Consistency: Reads from GSIs are always eventually consistent. This means that the data in the GSI may not reflect the most recent updates in the base table immediately, because updates to the GSI are performed asynchronously. The `ConsistentRead` parameter is not supported on GSIs; applications that require strong consistency must read from the base table or from an LSI, where strongly consistent reads are available at twice the read capacity cost.

- Index Maintenance Overhead: Managing indexes adds complexity to your data model. You need to consider the number of indexes, the attributes you index, and the data projection for each index. Deleting or modifying indexes can also impact your application’s performance and availability.

Example of a Global Secondary Index for User Profiles

Consider a table storing user profiles with the following attributes: `userId` (partition key), `username` (sort key), `email`, `firstName`, `lastName`, and `creationDate`. We want to enable queries by both username and email. A GSI can be created to facilitate these queries.

Base Table: UserProfiles

Primary Key: userId (Partition Key), username (Sort Key)

Attributes: userId (String), username (String), email (String), firstName (String), lastName (String), creationDate (String)

GSI 1: `UsernameIndex`

- Partition Key: `username`

- Sort Key: `userId`

- Projected Attributes: `email`, `firstName`, `lastName`, `creationDate`

GSI 2: `EmailIndex`

- Partition Key: `email`

- Sort Key: `userId`

- Projected Attributes: `username`, `firstName`, `lastName`, `creationDate`

Explanation:

This configuration allows for efficient queries by username and email. For example, to find a user by their username, query the `UsernameIndex` with the username as the partition key value; the `userId` sort key does not need to be supplied and simply orders the matching entries. Similarly, to find a user by their email, query the `EmailIndex` with the email as the partition key value.

The projected attributes in each GSI provide the necessary information for retrieving user details without requiring additional lookups to the base table. This design improves query performance for common user profile lookup scenarios. It’s essential to provision appropriate read capacity units (RCUs) for each GSI to handle the expected query load.
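As a rough sketch, the table and both indexes could be described with the `CreateTable` parameters below. The capacity numbers are placeholders, not recommendations, and the function name is hypothetical. Only key attributes appear in `AttributeDefinitions`, and `username` is left out of the `EmailIndex` projection list because the base table's key attributes are projected into every index automatically.

```python
def user_profiles_table_definition(rcus: int = 5, wcus: int = 5) -> dict:
    """Build CreateTable parameters for UserProfiles with two GSIs."""
    throughput = {"ReadCapacityUnits": rcus, "WriteCapacityUnits": wcus}  # placeholder values

    def gsi(index_name: str, partition_attr: str, projected: list) -> dict:
        return {
            "IndexName": index_name,
            "KeySchema": [
                {"AttributeName": partition_attr, "KeyType": "HASH"},
                {"AttributeName": "userId", "KeyType": "RANGE"},
            ],
            "Projection": {"ProjectionType": "INCLUDE", "NonKeyAttributes": projected},
            "ProvisionedThroughput": dict(throughput),
        }

    return {
        "TableName": "UserProfiles",
        "AttributeDefinitions": [
            {"AttributeName": "userId", "AttributeType": "S"},
            {"AttributeName": "username", "AttributeType": "S"},
            {"AttributeName": "email", "AttributeType": "S"},
        ],
        "KeySchema": [
            {"AttributeName": "userId", "KeyType": "HASH"},
            {"AttributeName": "username", "KeyType": "RANGE"},
        ],
        "ProvisionedThroughput": dict(throughput),
        "GlobalSecondaryIndexes": [
            gsi("UsernameIndex", "username", ["email", "firstName", "lastName", "creationDate"]),
            gsi("EmailIndex", "email", ["firstName", "lastName", "creationDate"]),
        ],
    }


if __name__ == "__main__":
    definition = user_profiles_table_definition()
    print([index["IndexName"] for index in definition["GlobalSecondaryIndexes"]])
```

Assuming boto3 and valid credentials, the dictionary could be passed to `boto3.client("dynamodb").create_table(**definition)`.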

Optimizing Read and Write Operations

Optimizing read and write operations is crucial for achieving high performance and cost-efficiency in DynamoDB. This section details techniques for minimizing latency, maximizing throughput, and ensuring the resilience of your applications against throttling and other potential issues. Effective optimization strategies directly impact the scalability and responsiveness of your DynamoDB-backed services.

Reducing Read Latency

Read latency can be significantly reduced by leveraging DynamoDB’s features and architectural considerations. Strategies include utilizing eventually consistent reads and implementing caching mechanisms. These techniques help balance performance and consistency requirements.

- Eventually Consistent Reads: By default, DynamoDB reads are eventually consistent, which means they may return data that is slightly out of date. Strongly consistent reads, requested by setting `ConsistentRead` to true, return the most up-to-date data but consume twice the read capacity and can have higher latency. Staying with eventually consistent reads is particularly beneficial for applications where absolute consistency is not critical, such as displaying user profiles or activity feeds.

- Caching Strategies: Implementing caching, either at the application level or through services like Amazon ElastiCache, can drastically reduce read latency. Caching frequently accessed data avoids repeated reads from DynamoDB, lowering both latency and provisioned read capacity costs.

- Application-Level Caching: Application-level caching involves storing frequently accessed data within the application’s memory. This is suitable for small datasets or scenarios where the data changes infrequently.

- ElastiCache for DynamoDB: Amazon ElastiCache provides a fully managed in-memory caching service that can be used to cache DynamoDB data. The application implements the caching strategy on top of it, typically lazy loading (cache-aside) for reads and write-through for updates, and is responsible for expiring or invalidating entries to limit the risk of serving stale data.
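As a rough illustration of application-level caching, the sketch below shows a cache-aside helper with time-based expiry. The `TTLCache` class and its `loader` parameter are hypothetical names; in practice the loader would wrap a DynamoDB read such as `get_item`.

```python
import time


class TTLCache:
    """Tiny in-process cache-aside helper with per-entry expiry."""

    def __init__(self, loader, ttl_seconds=60.0, clock=time.monotonic):
        self._loader = loader      # called on a miss, e.g. a DynamoDB read
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}         # key -> (expires_at, value)
        self.hits = 0
        self.misses = 0

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1
            return entry[1]
        # Miss or expired entry: load from the backing store and cache the result.
        self.misses += 1
        value = self._loader(key)
        self._entries[key] = (now + self._ttl, value)
        return value

    def invalidate(self, key):
        """Drop an entry, for example right after the item is updated."""
        self._entries.pop(key, None)
```

A short TTL bounds how stale a cached profile can become, while calling `invalidate` after a write keeps the cache in step with updates made by the same process.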

Optimizing Write Performance

Optimizing write performance in DynamoDB is essential for handling high write throughput and ensuring data durability. Techniques include batch writes and careful consideration of item size.

- Batch Writes: The `BatchWriteItem` API allows writing multiple items in a single request, improving write throughput compared to individual `PutItem` or `DeleteItem` operations. Batch writes reduce the overhead of network round trips, although each item still consumes the same write capacity as an individual write. The maximum number of items that can be written in a single `BatchWriteItem` request is 25, with a maximum item size of 400KB and a maximum request size of 16MB. A batch is not atomic: items that could not be written are returned in `UnprocessedItems` and must be resubmitted.

BatchWriteItem Request Limit: Maximum 25 items per request.

- Item Size Considerations: The size of items stored in DynamoDB affects write performance. Larger items consume more write capacity units (WCUs) and can increase latency. Optimizing data models to minimize item size can improve write throughput.

- Data Compression: For large attribute values, consider compressing the data before storing it in DynamoDB. This reduces the item size and improves write performance. Common compression algorithms like GZIP or Snappy can be employed.

- Attribute Selection: Only store the necessary attributes for a given use case. Avoid including unnecessary data in items to reduce their overall size.

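The batch-write limit can be handled with a small chunking helper. The following is a minimal sketch that assumes `client` behaves like a boto3 DynamoDB client and that `items` is a list already in DynamoDB attribute-value format; the function names and retry settings are illustrative.

```python
import time

MAX_BATCH_SIZE = 25  # BatchWriteItem limit on put/delete requests per call


def chunked(items, size=MAX_BATCH_SIZE):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def batch_put(client, table_name, items, max_attempts=5, base_delay=0.05,
              sleep=time.sleep):
    """Write items in groups of up to 25, resubmitting any UnprocessedItems."""
    for group in chunked(items):
        pending = {table_name: [{"PutRequest": {"Item": item}} for item in group]}
        for attempt in range(max_attempts):
            response = client.batch_write_item(RequestItems=pending)
            pending = response.get("UnprocessedItems") or {}
            if not pending:
                break
            # Back off before resubmitting whatever was not written.
            sleep(base_delay * (2 ** attempt))
        else:
            raise RuntimeError("items still unprocessed after %d attempts" % max_attempts)
```

Resubmitting only the `UnprocessedItems` map avoids rewriting items that already succeeded.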

Handling Throttling Errors and Implementing Retries

DynamoDB throttles read and write operations when requests exceed the provisioned capacity. Implementing a robust error handling strategy, including retries, is essential for maintaining application availability and ensuring data consistency.

- Throttling Error Detection: Identify throttling errors by examining the HTTP status code and the error code returned by DynamoDB. A throttled request returns HTTP status 400 with `ProvisionedThroughputExceededException`, which indicates that the provisioned read or write capacity has been exceeded.

- Retry Mechanism: Implement a retry mechanism with exponential backoff to handle throttling errors. The AWS SDKs already retry throttled requests automatically, so custom logic mainly matters once those built-in retries are exhausted. Exponential backoff involves increasing the delay between retries, which gives the table time to recover. The retry logic should include a maximum number of retries to prevent indefinite retries and potential data inconsistencies.

- Exponential Backoff Formula: The delay between retries can be calculated using the following formula:

`delay = base * (2 ^ attempt)`

Where:

- `base` is the initial delay (e.g., 100 milliseconds).

- `attempt` is the number of retry attempts.

- Jitter: Introduce a small amount of random jitter to the delay to avoid all clients retrying simultaneously, which could exacerbate the throttling issue.


- Monitoring and Alerting: Monitor the number of throttling errors and set up alerts to proactively identify and address capacity issues. Monitoring metrics such as `ConsumedWriteCapacityUnits` and `ProvisionedWriteCapacityUnits` helps in identifying potential bottlenecks and scaling resources accordingly. Amazon CloudWatch can be used to create dashboards and set up alarms.
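The retry strategy described above can be sketched as follows. This is an illustrative helper, not SDK code: the function names, the 5-second cap, and the 10% jitter fraction are assumptions, and the throttle check relies on the `response` dictionary that botocore's `ClientError` carries.

```python
import random
import time

THROTTLE_CODES = {"ProvisionedThroughputExceededException", "ThrottlingException"}


def backoff_delay(attempt, base=0.1, cap=5.0, jitter=0.1, rng=random.random):
    """delay = base * 2^attempt, capped, plus a small random jitter (seconds)."""
    delay = min(cap, base * (2 ** attempt))
    return delay + rng() * jitter * delay


def is_throttle(exc):
    """True if exc carries a botocore-style error response with a throttling code."""
    response = getattr(exc, "response", None)
    if not isinstance(response, dict):
        return False
    return response.get("Error", {}).get("Code") in THROTTLE_CODES


def call_with_retries(operation, max_retries=5, sleep=time.sleep, rng=random.random):
    """Run operation(), retrying throttling errors up to max_retries times."""
    for attempt in range(max_retries + 1):
        try:
            return operation()
        except Exception as exc:
            # Non-throttling errors and the final failed attempt are re-raised.
            if not is_throttle(exc) or attempt == max_retries:
                raise
            sleep(backoff_delay(attempt, rng=rng))
```

A call such as `call_with_retries(lambda: client.put_item(TableName="Orders", Item=item))` would wrap a single write; the table name here is only an example.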

Handling Large Items and Data Compression

Managing large items and optimizing storage efficiency are critical aspects of DynamoDB design, especially when dealing with datasets that exceed the service’s default size limits. This section details strategies for handling items larger than 400KB and employing data compression techniques to improve storage and performance.

Managing Items Exceeding the 400KB Size Limit

DynamoDB imposes a 400KB size limit on individual items. When an item’s attributes collectively exceed this limit, alternative approaches are necessary to accommodate the data.

- Item Splitting: Divide a large item into several smaller items that share a partition key and are distinguished by the sort key. Splitting one large attribute into several attributes of the same item does not help, because the 400KB limit applies to the item as a whole. For example, a large text field containing a document can be split into sections or pages, each stored as a separate item, and reassembled with a single `Query`. Updates that must change several parts atomically then require a transaction (`TransactWriteItems`), since a single-item write no longer covers the whole document.

- Using Amazon S3: Store large item attributes in Amazon S3 and store a reference (e.g., S3 object key) within the DynamoDB item. This approach is the most common solution for large binary or text data. DynamoDB stores the metadata, while S3 handles the storage of the bulk data. This minimizes the size of the DynamoDB item, improving performance and cost efficiency. For example, a large image file could be stored in S3, and the DynamoDB item would contain the S3 object key, along with other relevant metadata like the image’s description, upload date, and user ID.

- Combining Splitting and S3: Employ a hybrid approach where some parts of the data are split across smaller items within DynamoDB, and the remaining large portions are stored in S3. This method optimizes storage based on the nature of the data.

Implementing Data Compression within DynamoDB

Data compression can significantly reduce the storage space required by items and improve read/write performance, particularly for text-based attributes.

- Compression Algorithms: Choose a suitable compression algorithm. Common options include:

- GZIP: A widely used compression algorithm that offers a good balance between compression ratio and speed.

- DEFLATE: The underlying algorithm used by GZIP, providing similar compression characteristics.

- LZ4: A fast compression algorithm suitable for scenarios where speed is critical.

- Compression Process:

- Before writing data to DynamoDB, compress the relevant attribute(s) using the chosen algorithm.

- Store the compressed data within the DynamoDB item.

- When reading the data, decompress the attribute to retrieve the original data.

- Considerations:

- CPU Overhead: Compression and decompression operations consume CPU resources. Evaluate the impact on application performance, especially for high-volume read/write operations.

- Storage Savings: Assess the potential storage savings by measuring the compression ratio achieved with different algorithms and data types.

- Data Type Suitability: Compression is most effective for text-based attributes, such as JSON documents, XML files, or large strings. Binary data may not compress as effectively.
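The compression process can be sketched with Python's standard library. This example uses GZIP on a JSON document; the function names are illustrative, and the compressed bytes would be stored in a Binary (`B`) attribute.

```python
import gzip
import json


def compress_attribute(document):
    """Serialize a JSON-compatible object and gzip it into bytes."""
    raw = json.dumps(document, separators=(",", ":")).encode("utf-8")
    return gzip.compress(raw)


def decompress_attribute(blob):
    """Reverse compress_attribute: gunzip the bytes and parse the JSON."""
    return json.loads(gzip.decompress(blob).decode("utf-8"))
```

Comparing `len(compress_attribute(doc))` with the uncompressed length on representative data is a quick way to check whether the CPU cost is worth the storage savings.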

Using Amazon S3 to Store Large Item Attributes, Referencing them within the DynamoDB Table

Storing large item attributes in S3 and referencing them within DynamoDB is a common and effective strategy for handling large data volumes.

- Procedure:

- Upload to S3: When an application needs to store a large attribute (e.g., a video, a large JSON document), it first uploads the data to an S3 bucket.

- Record the S3 Object Key: The application chooses the object key when it uploads the data. Together with the bucket name, the key uniquely identifies the object.

- Store Metadata in DynamoDB: Create or update a DynamoDB item to store metadata about the large object, including:

- The S3 object location, as a bucket name and key or as a full URI (e.g., `s3://my-bucket/path/to/object.mp4`).

- Other relevant metadata, such as the object’s size, content type, upload date, and any associated tags or identifiers.

- The user ID or any other relevant identifiers.

- Retrieve Data: When the application needs to access the large attribute, it retrieves the DynamoDB item and uses the S3 object key to access the object from S3.

- Benefits:

- Scalability: S3 is designed for high scalability and can handle virtually unlimited amounts of data.

- Cost Efficiency: S3 storage is generally more cost-effective for large data volumes than storing data directly in DynamoDB.

- Performance: Keeping DynamoDB items small reduces the capacity consumed and the latency of each read and write, and the large object is fetched from S3 only when it is actually needed.

- Data Durability: S3 provides high data durability through object replication and data redundancy.

- Example: Consider a system storing user profiles with large images.

- The user uploads a profile picture.

- The application uploads the image to an S3 bucket, obtaining the S3 object key (e.g., `s3://my-image-bucket/user/123/profile.jpg`).

- The application creates a DynamoDB item for the user, storing the S3 object key in an attribute called `profile_image_s3_key`. Other attributes, such as `user_id`, `username`, and `profile_description`, are stored directly in DynamoDB.

- When the application needs to display the user’s profile, it retrieves the DynamoDB item and uses the `profile_image_s3_key` to fetch the image from S3.
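The profile-image flow above might look like the following sketch. It assumes `s3` and `dynamodb` behave like boto3 clients, that `user_id` alone is the table's primary key, and that the bucket and table names are placeholders; the function names and the `profile_image_size` attribute are hypothetical.

```python
def save_profile_image(s3, dynamodb, user_id, image_bytes,
                       bucket="my-image-bucket", table_name="UserProfiles"):
    """Upload the image to S3, then record its object key on the user's item."""
    key = "user/%s/profile.jpg" % user_id
    s3.put_object(Bucket=bucket, Key=key, Body=image_bytes,
                  ContentType="image/jpeg")
    dynamodb.update_item(
        TableName=table_name,
        Key={"user_id": {"S": user_id}},
        UpdateExpression="SET profile_image_s3_key = :k, profile_image_size = :n",
        ExpressionAttributeValues={
            ":k": {"S": key},
            ":n": {"N": str(len(image_bytes))},
        },
    )
    return key


def load_profile_image(s3, dynamodb, user_id,
                       bucket="my-image-bucket", table_name="UserProfiles"):
    """Read the pointer from DynamoDB, then fetch the bytes from S3."""
    response = dynamodb.get_item(TableName=table_name,
                                 Key={"user_id": {"S": user_id}})
    item = response.get("Item")
    if not item or "profile_image_s3_key" not in item:
        return None
    obj = s3.get_object(Bucket=bucket, Key=item["profile_image_s3_key"]["S"])
    return obj["Body"].read()
```

Uploading to S3 before writing the pointer means a failed upload never leaves a DynamoDB item that references a missing object.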

Monitoring and Troubleshooting DynamoDB Performance

Effective monitoring and proactive troubleshooting are crucial for maintaining optimal performance and scalability in DynamoDB. By continuously observing key metrics and implementing appropriate strategies, potential bottlenecks can be identified and addressed before they impact application performance. This section outlines the critical aspects of monitoring and troubleshooting DynamoDB, including essential metrics, common issues, and practical solutions.

Key Metrics for DynamoDB Performance Monitoring

Monitoring DynamoDB performance involves tracking several key metrics to understand table behavior and identify potential issues. These metrics, accessible through AWS CloudWatch, provide insights into resource utilization, request patterns, and potential performance bottlenecks.

- Consumed Capacity: The `ConsumedReadCapacityUnits` and `ConsumedWriteCapacityUnits` metrics report the read and write capacity consumed by the table. Comparing them with the provisioned values helps to determine if the provisioned capacity is sufficient for the workload. Consumption that approaches or exceeds the provisioned capacity can lead to throttling.

- Throttled Requests: Throttled requests occur when the table exceeds its provisioned read or write capacity. CloudWatch reports them through `ThrottledRequests`, `ReadThrottleEvents`, and `WriteThrottleEvents`. A high number of throttled requests indicates that the table needs more provisioned capacity or that the application needs to be optimized to reduce the number of requests.

- Successful Requests: The sample count of the `SuccessfulRequestLatency` metric gives the number of successful requests per operation. A decrease in successful requests, coupled with an increase in throttled requests, is a strong indicator of a capacity problem.

- Latency: `SuccessfulRequestLatency` measures the time it takes for a request to be processed. High latency can indicate performance bottlenecks, such as insufficient provisioned capacity, inefficient queries, or network issues. Monitoring read and write operations separately provides more granular insights.

- ItemCount: The number of items stored in the table. This value comes from `DescribeTable` rather than CloudWatch and is refreshed only about every six hours, so treat it as an approximation. Tracking it helps to understand the data volume and the impact on storage and query performance.

- Cache Hits and Misses: For tables fronted by DAX (DynamoDB Accelerator), cache hit and miss metrics (for example `ItemCacheHits` and `ItemCacheMisses`) indicate the effectiveness of the caching layer. High cache hit rates improve performance by reducing the load on the DynamoDB table.

- User Errors: The `UserErrors` metric counts requests rejected with an HTTP 400 error, such as invalid requests or access denied errors. Monitoring user errors can help identify application-level issues that affect DynamoDB performance.

Common Performance Bottlenecks and Solutions

Identifying and addressing common performance bottlenecks is essential for maintaining optimal DynamoDB performance. Several factors can contribute to performance issues, and understanding these issues is crucial for effective troubleshooting.

- Insufficient Provisioned Capacity: This is one of the most common causes of performance bottlenecks. If the provisioned read or write capacity is insufficient for the workload, requests will be throttled.

- Solution: Increase the provisioned read or write capacity. Consider using auto-scaling to automatically adjust capacity based on demand.

- Inefficient Queries: Queries that scan large portions of the table or use inefficient indexes can negatively impact performance.

- Solution: Optimize queries by using appropriate partition and sort keys, designing efficient indexes, and avoiding full table scans.

- Hot Partitioning: When a disproportionate amount of read or write traffic is directed to a single partition, it can lead to throttling and performance degradation.

- Solution: Choose a partition key that distributes data evenly across partitions. Consider using composite keys or adding randomness to the partition key to avoid hot partitioning.

- Large Items: Storing large items in DynamoDB can increase latency and consume more capacity units.

- Solution: Optimize item sizes by compressing data or storing large objects in Amazon S3 and referencing them in DynamoDB.

- Network Issues: Network latency or connectivity problems can impact DynamoDB performance.

- Solution: Ensure that the application and DynamoDB are in the same AWS region to minimize network latency. Monitor network connectivity and troubleshoot any network-related issues.

- Application-Level Issues: Inefficient application code or design can contribute to performance bottlenecks.

- Solution: Review the application code for inefficient DynamoDB operations, such as excessive reads or writes. Optimize the application’s request patterns to reduce the load on DynamoDB.
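One way to add randomness to a partition key, as suggested for hot partitions above, is write sharding. The sketch below is illustrative only: the `#` separator, the shard count of 10, and the function names are assumptions.

```python
import random


def sharded_partition_key(base_key, shard_count=10, rng=random.randrange):
    """Append a random shard suffix so writes for one logical key spread out."""
    return "%s#%d" % (base_key, rng(shard_count))


def all_shard_keys(base_key, shard_count=10):
    """Every physical key a reader must query to reassemble the logical key."""
    return ["%s#%d" % (base_key, shard) for shard in range(shard_count)]
```

The trade-off is on the read side: a reader has to query each key returned by `all_shard_keys` and merge the results.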

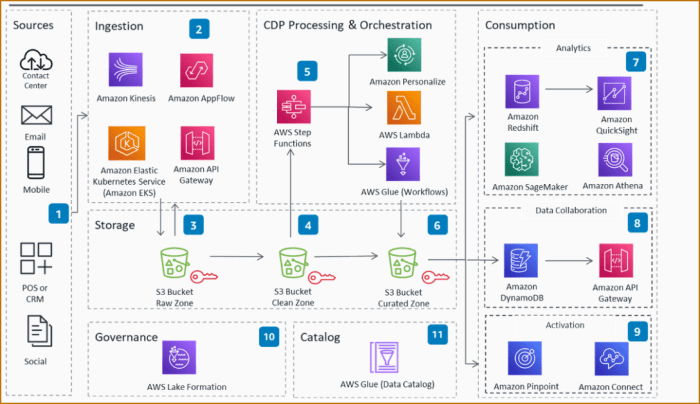

Setting Up Alerts and Monitoring with AWS CloudWatch

AWS CloudWatch provides a comprehensive monitoring service for DynamoDB, allowing users to track metrics, set up alerts, and visualize performance data. Proactive monitoring and alerting are essential for identifying and resolving performance issues promptly.

- Accessing CloudWatch:

- Navigate to the AWS Management Console and select CloudWatch.

- Selecting DynamoDB Metrics:

- In the CloudWatch console, select “Metrics” and then “DynamoDB”.

- Choose the specific metrics you want to monitor (e.g., ConsumedReadCapacityUnits, ConsumedWriteCapacityUnits, ThrottledRequests).

- Creating CloudWatch Dashboards:

- Create custom dashboards to visualize key DynamoDB metrics over time. This provides a centralized view of table performance.

- Add relevant metrics to the dashboard, such as Consumed Capacity Units, Throttled Requests, and Latency.

- Setting Up CloudWatch Alarms:

- Create CloudWatch alarms to trigger notifications when specific thresholds are exceeded.

- For example, set an alarm to trigger when ThrottledRequests exceed a certain number within a defined period.

- Configure the alarm to send notifications to an SNS topic.

- Configuring SNS Notifications:

- Create an SNS topic to receive notifications from CloudWatch alarms.

- Subscribe to the SNS topic to receive notifications via email, SMS, or other supported methods.

- Analyzing Alarm Triggers:

- When an alarm triggers, review the CloudWatch metrics to identify the root cause of the issue.

- Use the CloudWatch logs and application logs to investigate the issue further.

Example: Consider a retail application using DynamoDB to store product information. The application’s DynamoDB table experiences an unexpected surge in traffic during a flash sale. As a result, the `ThrottledRequests` metric increases significantly, and the application’s performance degrades. Using CloudWatch, the following steps are implemented:

1. Metric Monitoring

A CloudWatch dashboard is created to display `ConsumedWriteCapacityUnits`, `WriteThrottleEvents`, and `SuccessfulRequestLatency` for write operations.

2. Alarm Configuration

An alarm is set up to trigger when the sum of `WriteThrottleEvents` exceeds 100 within a 5-minute period. The alarm sends a notification to an SNS topic.

3. Alert Response

When the alarm triggers, an email notification is sent to the operations team. The team investigates the issue and discovers that the provisioned write capacity is insufficient for the increased traffic.

4. Resolution

The operations team increases the provisioned write capacity of the DynamoDB table, resolving the throttling issue and restoring application performance. This example highlights the importance of proactive monitoring and alerting for maintaining DynamoDB performance during peak traffic periods.
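The alarm from this example could be expressed as parameters for CloudWatch's `PutMetricAlarm` operation. This is a sketch under assumptions: it uses the `WriteThrottleEvents` metric that DynamoDB publishes in the `AWS/DynamoDB` namespace, and the helper name, alarm name, and example ARN are illustrative.

```python
def write_throttle_alarm(table_name, sns_topic_arn, threshold=100,
                         period_seconds=300):
    """Build keyword arguments for a CloudWatch PutMetricAlarm call."""
    return {
        "AlarmName": "%s-write-throttles" % table_name,
        "Namespace": "AWS/DynamoDB",
        "MetricName": "WriteThrottleEvents",
        "Dimensions": [{"Name": "TableName", "Value": table_name}],
        "Statistic": "Sum",              # total throttle events in the period
        "Period": period_seconds,        # 300 seconds = 5 minutes
        "EvaluationPeriods": 1,
        "Threshold": float(threshold),
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",  # no data points means no throttling
        "AlarmActions": [sns_topic_arn],
    }
```

With boto3, the result would be passed as `cloudwatch.put_metric_alarm(**write_throttle_alarm("Products", topic_arn))`, where `topic_arn` is the ARN of the SNS topic that notifies the operations team.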

Data Versioning and Conflict Resolution

Handling concurrent updates and ensuring data integrity are crucial aspects of designing scalable DynamoDB tables. As multiple users or applications access and modify data simultaneously, the potential for conflicts increases. Implementing robust versioning and conflict resolution mechanisms becomes essential to maintain data consistency and prevent data loss. This section details strategies for managing concurrent updates in DynamoDB.

Handling Concurrent Updates Using Optimistic Locking

Optimistic locking is a strategy that allows multiple clients to read and modify data without acquiring explicit locks. It assumes that conflicts are infrequent and handles them when they occur. This approach is more efficient than pessimistic locking, which can lead to contention and reduced throughput.

- Implementation Details: Optimistic locking relies on a version attribute within the item. This attribute, typically an integer or a timestamp, is incremented with each update. When a client reads an item, it also reads the version attribute. When the client attempts to update the item, it includes the version attribute in the `ConditionExpression` of the `UpdateItem` API call.

- Conditional Writes: The `ConditionExpression` specifies that the update should only proceed if the version attribute in the database matches the version attribute the client read. If the versions match, the update is applied, and the version attribute is incremented. If the versions do not match, the update fails, indicating a conflict. The client can then re-read the item, apply its changes again, and retry the update.

- Example: Consider an item with a `version` attribute initialized to 1.

- Client A reads the item and sees `version = 1`.

- Client B reads the item and sees `version = 1`.

- Client A updates the item and sets `ConditionExpression` to `version = :expected_version`, where `:expected_version` is 1. The update succeeds, and `version` becomes 2.

- Client B, now trying to update the item with `ConditionExpression` to `version = :expected_version`, where `:expected_version` is 1, will fail because the current `version` is 2. Client B must re-read the item, apply its changes, and retry the update with the correct version.

- Benefits: Optimistic locking improves performance by avoiding the overhead of pessimistic locking. It allows for higher throughput and scalability, as multiple clients can concurrently access and modify data without blocking each other.

- Limitations: Optimistic locking requires clients to handle potential conflicts. It is best suited for scenarios where conflicts are infrequent. Frequent conflicts can lead to increased retry attempts and reduced overall performance.
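The read, conditional update, and retry cycle can be sketched as below. It assumes `client` behaves like a boto3 DynamoDB client and that each item carries a numeric `version` attribute and a `status` attribute; the function names are hypothetical. Placeholders such as `#v` and `#s` avoid clashes with DynamoDB reserved words.

```python
def versioned_update(table_name, key, expected_version, new_status):
    """Build UpdateItem arguments that succeed only if the version is unchanged."""
    return {
        "TableName": table_name,
        "Key": key,
        "UpdateExpression": "SET #s = :status, #v = :next",
        "ConditionExpression": "#v = :expected",
        "ExpressionAttributeNames": {"#s": "status", "#v": "version"},
        "ExpressionAttributeValues": {
            ":status": {"S": new_status},
            ":expected": {"N": str(expected_version)},
            ":next": {"N": str(expected_version + 1)},
        },
    }


def _error_code(exc):
    response = getattr(exc, "response", None)
    if isinstance(response, dict):
        return response.get("Error", {}).get("Code")
    return None


def update_status_with_retry(client, table_name, key, new_status, max_attempts=3):
    """Re-read and retry when another writer changed the item first."""
    for _ in range(max_attempts):
        item = client.get_item(TableName=table_name, Key=key,
                               ConsistentRead=True)["Item"]
        current = int(item["version"]["N"])
        try:
            client.update_item(
                **versioned_update(table_name, key, current, new_status))
            return current + 1  # the version now stored on the item
        except Exception as exc:
            if _error_code(exc) != "ConditionalCheckFailedException":
                raise
    raise RuntimeError("gave up after repeated version conflicts")
```

The strongly consistent read before each attempt ensures the retry is based on the latest committed version.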

Implementing Data Versioning to Track Changes Over Time

Data versioning allows tracking changes to items over time, enabling auditing, rollback capabilities, and the ability to analyze data evolution. This is particularly useful in scenarios where the history of data modifications is important.

- Versioning Strategies: Several approaches can be used to implement data versioning in DynamoDB.

- Appending Versions: Maintain a list or a set attribute within the item to store previous versions. Each time an item is updated, the previous version is appended to this list. This approach is suitable for a limited number of versions, as the size of the attribute is constrained by DynamoDB’s item size limit.

- Separate Version Table: Create a separate DynamoDB table to store the history of changes. Each entry in the version table would contain the item’s primary key, the version number, the updated attributes, and a timestamp. This approach is more scalable and allows for storing an unlimited number of versions.

- Using Streams and Lambda: Utilize DynamoDB Streams to capture all changes to the main table. A Lambda function can then process these stream records and store the previous item states in a separate version table or in a dedicated attribute within the item.

- Example: Implementing a separate version table strategy.

- Create a version table with the following attributes:

- `PrimaryKey`: The original item’s primary key (e.g., `userId`).

- `VersionNumber`: A sort key representing the version number (e.g., an integer or timestamp).

- `Data`: A JSON object containing the previous item’s attributes.

- `Timestamp`: The timestamp of the version creation.

- When an item is updated in the main table, a Lambda function triggered by DynamoDB Streams inserts a new record into the version table. This record contains the original item’s primary key, a new `VersionNumber`, the previous item’s data, and a timestamp.

- To retrieve a specific version, query the version table using the primary key and `VersionNumber`.


- Considerations: When implementing data versioning, consider the storage costs, query performance, and the complexity of managing multiple tables or attributes. The choice of versioning strategy depends on the specific requirements of the application.
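For the separate version table strategy, the stream-processing step might be sketched as follows. The sketch assumes the stream's view type includes old images (`OLD_IMAGE` or `NEW_AND_OLD_IMAGES`), that the main table's key is `userId`, and that each item carries a numeric `version` attribute that can serve as `VersionNumber`; the function name is hypothetical.

```python
import json


def version_records(event):
    """Turn DynamoDB Streams MODIFY/REMOVE records into version-table items."""
    items = []
    for record in event.get("Records", []):
        if record.get("eventName") not in ("MODIFY", "REMOVE"):
            continue  # INSERT events have no previous state to archive
        change = record["dynamodb"]
        old_image = change.get("OldImage")
        if old_image is None:
            continue  # the stream is not configured to include old images
        items.append({
            "PrimaryKey": change["Keys"]["userId"],
            "VersionNumber": old_image["version"],
            "Data": {"S": json.dumps(old_image, sort_keys=True)},
            "Timestamp": {"N": str(int(change["ApproximateCreationDateTime"]))},
        })
    return items
```

A Lambda handler would call `version_records(event)` and write each returned item to the version table with `put_item`.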

Using Conditional Writes to Prevent Data Conflicts

Conditional writes are a fundamental mechanism for ensuring data consistency in DynamoDB. They allow you to specify conditions that must be met before an item is updated or created. This is a powerful tool for preventing data conflicts and maintaining data integrity.

- `ConditionExpression` and `ExpressionAttributeValues`: The `ConditionExpression` attribute of the `PutItem`, `UpdateItem`, and `DeleteItem` API calls specifies a condition that must evaluate to true for the operation to succeed. The `ExpressionAttributeValues` attribute provides the values used in the `ConditionExpression`.

- Preventing Overwrites: Conditional writes can be used to prevent overwriting existing data. For example, to prevent the creation of an item if an item with the same primary key already exists, you can use a `ConditionExpression` that checks for the absence of the primary key.

`ConditionExpression: "attribute_not_exists(PrimaryKeyAttribute)"`

- Checking Attribute Values: Conditional writes can be used to check the values of existing attributes before updating an item. This is essential for optimistic locking and other conflict resolution strategies.

`ConditionExpression: "attribute_exists(version) AND version = :expectedVersion"`

- Handling Concurrent Updates: Conditional writes can be used in conjunction with optimistic locking to handle concurrent updates. The `ConditionExpression` checks the version attribute to ensure that the update is based on the latest version of the item. If the version does not match, the update fails, and the client can retry the operation.

- Example: Preventing an item from being created if it already exists.

- Use the `PutItem` API call with the following parameters:

- `TableName`: The name of the table.

- `Item`: The item to be created.

- `ConditionExpression`: `attribute_not_exists(userId)`

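- `ExpressionAttributeValues`: Not needed here, because the condition references no value placeholders, and DynamoDB rejects a request that supplies values its expressions do not use. (This example assumes the primary key attribute is `userId`.)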

- If an item with the specified `userId` already exists, the `PutItem` call will fail, preventing the creation of a duplicate item.


- Best Practices: Carefully design the `ConditionExpression` to ensure it accurately reflects the desired conditions. Use appropriate `ExpressionAttributeNames` and `ExpressionAttributeValues` to make the code more readable and maintainable. Always handle potential errors and exceptions when using conditional writes.
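The create-if-absent pattern can be sketched like this. It assumes `client` behaves like a boto3 DynamoDB client and that `userId` is the table's partition key; the function name is hypothetical.

```python
def _error_code(exc):
    response = getattr(exc, "response", None)
    if isinstance(response, dict):
        return response.get("Error", {}).get("Code")
    return None


def create_user_if_absent(client, table_name, user_id, attributes=None):
    """Create the item only if no item with this key exists.

    Returns True when the item was created and False when it already existed.
    """
    item = {"userId": {"S": user_id}}
    item.update(attributes or {})
    try:
        client.put_item(
            TableName=table_name,
            Item=item,
            ConditionExpression="attribute_not_exists(userId)",
        )
        return True
    except Exception as exc:
        # A failed condition is an expected outcome, not an error.
        if _error_code(exc) == "ConditionalCheckFailedException":
            return False
        raise
```

Returning a boolean lets the caller distinguish "already exists" from genuine failures, which still propagate as exceptions.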

Real-World Examples and Case Studies

To effectively understand DynamoDB’s scalability and design principles, it is crucial to examine real-world applications. Analyzing how companies have successfully implemented DynamoDB, addressing challenges, and optimizing performance provides valuable insights. This section presents case studies and comparative analyses to illustrate practical DynamoDB deployments.

Case Study: Mobile Gaming Platform

A prominent mobile gaming platform, with millions of daily active users, adopted DynamoDB to manage player profiles, game state, and leaderboard data. This platform needed a highly scalable and performant database solution to handle the fluctuating demands of its user base, especially during peak gaming hours and new game releases. The design choices made by the platform’s engineering team included:

- Data Modeling: The platform used a single-table design approach. All related data for a player (profile, inventory, game progress) was stored under the same partition key as a single item collection, leveraging the flexible schema of DynamoDB. This design optimized for reads by co-locating related data and reduced the number of queries required to retrieve player information.

- Partition Key and Sort Key Selection: The partition key was the player’s unique ID. The sort key was designed to represent different types of player data. For example, the sort key could use a prefix to differentiate between player profile information, inventory items, and game progress details. This approach allowed for efficient querying of specific data types for each player.

- Indexing Strategies: The platform extensively used Global Secondary Indexes (GSIs) to support various query patterns, such as searching players by username or retrieving the top players on a leaderboard. GSIs were carefully designed to optimize query performance and minimize the impact on write operations.

- Capacity Planning and Provisioning: The team implemented auto-scaling to dynamically adjust the provisioned capacity based on traffic patterns. They closely monitored the consumed read and write capacity units (RCUs and WCUs) to ensure optimal performance and cost efficiency. This was particularly crucial during periods of high user activity.

- Challenges and Solutions: One significant challenge was managing the size of individual items, especially for players with large inventories. The solution involved:

- Data Compression: Applying data compression techniques to reduce the storage size of large inventory lists.

- Pagination: Splitting large inventories across multiple items and paginating the queries that retrieve them, so that no single item approaches the maximum item size limit.

The mobile gaming platform experienced significant improvements in scalability, performance, and cost-effectiveness after migrating to DynamoDB. The platform can now handle millions of concurrent users with minimal latency, and the flexible design allowed them to rapidly iterate on new game features and updates.

Comparative Analysis of DynamoDB Table Designs for Different Use Cases

DynamoDB table designs must be tailored to the specific requirements of each application. Different use cases necessitate varying data models, indexing strategies, and capacity planning approaches.

- Gaming:

- Data Model: Typically, a single-table design is favored for its efficiency in retrieving related data. Player profiles, game state, and inventory data are often stored together in a single item collection under one partition key.

- Indexing: GSIs are essential for leaderboards, searching players, and filtering game data.

- Capacity Planning: Auto-scaling is crucial to handle peak loads during game events and new releases.

- Example: The mobile gaming platform case study described above illustrates this design.

- IoT (Internet of Things):

- Data Model: Time-series data modeling is common, where sensor readings are stored with timestamps. This often involves using the device ID as the partition key and the timestamp as the sort key.

- Indexing: Time-range queries are served directly by the table’s timestamp sort key. Local Secondary Indexes (LSIs) enable queries on an alternate sort key within the same partition (e.g., retrieving a device’s readings ordered by reading type or value). GSIs may be used for more complex queries across devices.

- Capacity Planning: Write capacity is typically the primary concern due to the high volume of incoming data. Read capacity needs to be sufficient to handle data analysis and reporting.

- Example: An application monitoring environmental sensors could store data from each sensor with the sensor ID as the partition key and the timestamp as the sort key. This allows for efficient retrieval of data for a specific sensor over a given time period.

- Social Media:

- Data Model: Data models vary based on the application’s features. Some designs may use a single-table approach, while others might use multiple tables to separate user profiles, posts, and relationships.

- Indexing: GSIs are essential for searching users, hashtags, and trending topics.

- Capacity Planning: Both read and write capacity are important. The application needs to handle high write throughput for posts and comments, as well as high read throughput for news feeds and user profiles.

- Example: A social media platform could store user profiles keyed by user ID, with the profile fields as item attributes. Posts could be stored with a post ID as the partition key and a timestamp as the sort key. GSIs can be created to allow searching posts by hashtags or users.

The selection of the right DynamoDB design depends heavily on the specific use case. Each design has its own advantages and disadvantages. The key is to understand the access patterns, data volume, and query requirements of the application.
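As an illustration of the IoT access pattern, the sketch below builds a time-range query and pages through the results. The attribute names `sensorId` and `timestamp`, the use of ISO 8601 UTC strings, and the function names are assumptions; `client` is expected to behave like a boto3 DynamoDB client.

```python
def sensor_readings_query(table_name, sensor_id, start_iso, end_iso, limit=100):
    """Build Query arguments for one sensor's readings in a time range."""
    return {
        "TableName": table_name,
        # "#ts" stands in for the attribute name to avoid reserved-word clashes.
        "KeyConditionExpression": "sensorId = :sid AND #ts BETWEEN :start AND :end",
        "ExpressionAttributeNames": {"#ts": "timestamp"},
        "ExpressionAttributeValues": {
            ":sid": {"S": sensor_id},
            ":start": {"S": start_iso},
            ":end": {"S": end_iso},
        },
        "ScanIndexForward": False,  # newest readings first
        "Limit": limit,             # page size, not a cap on total results
    }


def query_all(client, query_kwargs):
    """Yield every matching item, following LastEvaluatedKey across pages."""
    kwargs = dict(query_kwargs)
    while True:
        page = client.query(**kwargs)
        for item in page.get("Items", []):
            yield item
        last_key = page.get("LastEvaluatedKey")
        if not last_key:
            return
        kwargs["ExclusiveStartKey"] = last_key
```

Timestamps stored as ISO 8601 strings in a single time zone sort lexicographically in chronological order, which is what makes the `BETWEEN` condition on the sort key work.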

Concluding Remarks

In conclusion, mastering the art of DynamoDB table design is essential for achieving scalability and performance in data-intensive applications. By understanding the core principles, data modeling techniques, capacity planning, and indexing strategies, developers can create DynamoDB tables that effectively handle growing data volumes and user traffic. From optimizing read and write operations to handling large items and implementing data versioning, this guide provides a comprehensive framework for building scalable and reliable data solutions with DynamoDB.

By embracing these principles, developers can unlock the full potential of DynamoDB and create applications that thrive in demanding environments.

Frequently Asked Questions

What is the maximum item size in DynamoDB?

The maximum item size in DynamoDB is 400KB. Attributes exceeding this limit require alternative storage methods, such as Amazon S3, with references in the DynamoDB table.

How does DynamoDB handle data consistency?

DynamoDB offers both eventually consistent and strongly consistent reads. Eventually consistent reads are the default; they consume half the read capacity of strongly consistent reads but may return stale data. Strongly consistent reads guarantee the latest data but may have higher latency. The choice depends on the application’s consistency requirements.

What are the costs associated with DynamoDB?

DynamoDB costs are primarily based on provisioned or on-demand capacity, data storage, data transfer, and index usage. Provisioned capacity involves paying for the read and write capacity units allocated to a table, while on-demand capacity charges based on actual usage. Indexing and data transfer also contribute to the overall cost.

How do I monitor the performance of my DynamoDB table?

AWS CloudWatch provides comprehensive monitoring capabilities for DynamoDB. Key metrics to monitor include consumed read/write capacity, throttled requests, latency, and error rates. Setting up CloudWatch alarms allows for proactive identification of performance bottlenecks and capacity adjustments.

Can I change the partition key of a DynamoDB table after it’s created?

No, the partition key of a DynamoDB table cannot be changed after the table is created. If a change is required, the data must be migrated to a new table with the desired partition key. The same applies to the sort key: changing it also requires migrating the data to a new table.